I have a long commute to work. It takes me about 90 minutes each way on public transportation, or about an hour if I take the car. There are some personal reasons why I live so far away from work, but I am neither sad nor stressed about it. Those 2-3 hours I spend on the road are wonderful times in which I either get caught up on my TED Talks, Jon Stewarts and Colbert Reports, or listen to podcasts when I’m in the car.

One of these podcasts is Econtalk with Russ Roberts. It deals with more than the pure money economics of “Planet Money”, but is far more scientifically rigorous than “Freakonomics”, which is more entertainment than anything else. The podcast is quite libertarian, with a healthy dose of skepticism towards the government, but it isn’t preachy or even biased. Russ does a good job of moderating the issues, he’s always well prepared, and I like his insights into all aspects of economics. He has made me a believer in Hayek (and Mises to some extent) and helped me appreciate the nuances of Friedman’s policies. His podcasts with Mike Munger are entertaining, but they have left me more conflicted than almost any other podcast I have heard.

And because Econtalk is about the social sciences in general, not just about finance, a few weeks ago he had Brian Nosek on to talk about his latest paper: Scientific Utopia: II. Restructuring Incentives and Practices to Promote Truth Over Publishability. I was very impressed with this podcast, not only because of the interesting topic, but because I recognized many of these traits among my astronomer friends, even though we are in the hard, natural sciences, not in the social sciences. If you can spare an hour, I implore you to listen to the podcast. I think it is very important to be aware of our unconscious biases when we are doing science and looking for publishable results.

The main and salient point that Nosek raises is that we as scientists try to genuinely do good science but that does not mean that we aren’t vulnerable to some of the reasoning and realizations that leverage the incentives to be successful at science. Read: we need to make sure to get published, so we have a hidden bias to pursue publishable results (journals will not publish null results). I mean, if I get data at a telescope, I’m expected to publish a paper on that data. To massage some scientific insight out of there can often be quite difficult, but the incentive to publish is there.

Russ reads aloud two of his quotes and I think they are so good that they bear repeating:

“The real problem is that the incentives for publishable results can often be at odds with the incentives for accurate results. This produces a conflict of interest. The conflict may increase the likelihood of design, analysis and reporting decisions that inflate the proportion of false results in the published literature.”

“Publishing is also the basis of a conflict of interest between personal interests and the objective of knowledge accumulation. The reason? Published and true are not synonyms. To the extent that publishing itself is rewarded then it is in scientists’ personal interest to publish regardless of whether the published findings are true.”

The sentence that published and true are not synonyms is the heart of the problem, and it is a pretty depressing idea, too. We like to see ourselves as the truthseekers, but personal interests can derail this. Incentives matter: certain kinds of results are valued more than others. We are subtly influenced to take the path that is most beneficial to our career, i.e. to seek out results that we can publish. But does that push our analysis in a certain way?

Nosek identifies 9 tricks or “things we do” where our bias shows:

- Leverage chance by running many low powered studies rather than a few high powered ones

- Uncritically dismiss failed studies as pilots or due to methodological flaws, but uncritically accept successful studies as methodologically sound

- Selectively report studies with positive results and not studies with negative results every time

- Stop data collection as soon as a reliable effect is obtained

- Continue data collection until a reliable effect is obtained

- Include multiple independent or dependent variables and report the subset that worked

- Maintain flexibility in design and analytical models, including the attempt of a variety of data exclusion or transformation methods, and report a subset

- Report a discovery as if it had been the result of a confirmatory test

- Once a reliable effect is obtained, do not do a direct replication; shame on them.
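To make one of these tricks concrete, here is a minimal simulation sketch of the "continue data collection until a reliable effect is obtained" item. It is not from Nosek's paper or the podcast; the sample sizes, the 1.96 threshold and the function names are all assumptions chosen for illustration. Every simulated study draws pure noise, so any "significant" result is a false positive. One strategy tests once at a fixed sample size, the other peeks after every new data point and stops at the first significant result.

```python
import math
import random

Z_CRIT = 1.96  # assumed two-sided 5% threshold for a z-test with known sigma = 1


def is_significant(total, n):
    """z-test of 'true mean is 0' given the sum of n unit-variance draws."""
    return abs(total / math.sqrt(n)) > Z_CRIT


def fixed_sample_study(rng, n=100):
    """Collect all n points first, then test exactly once."""
    total = sum(rng.gauss(0.0, 1.0) for _ in range(n))
    return is_significant(total, n)


def peeking_study(rng, n_min=10, n_max=100):
    """Test after every new point from n_min on; stop at the first 'effect'."""
    total = 0.0
    for n in range(1, n_max + 1):
        total += rng.gauss(0.0, 1.0)
        if n >= n_min and is_significant(total, n):
            return True
    return False


def false_positive_rate(study, n_studies=2000, seed=42):
    """Fraction of pure-noise studies that report a significant effect."""
    rng = random.Random(seed)
    return sum(study(rng) for _ in range(n_studies)) / n_studies


if __name__ == "__main__":
    print("fixed sample size:", false_positive_rate(fixed_sample_study))
    print("peek and stop:    ", false_positive_rate(peeking_study))
```

The fixed-sample rate should land near the nominal 5%, while the peeking rate comes out several times higher, even though no individual step looks like cheating.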

Now you may say, Tanya, that is all fine and dandy, but it only applies to the social sciences. Well, unfortunately no. I have seen the above over and over again in astronomy, too. When some observation does not agree with the model, the scientists who proposed the model suddenly dismiss it, and many projects are lying around in drawers because “the discovery” didn’t pan out. But even worse, when an observation begins to show even signs of agreeing with the proposed model, it gets sent to a prestigious journal, even if there isn’t critical statistical mass. Hey, it’s a pilot study, but it’s *really* important!

What do we do? Fix the journal / peer review system? Journals have their own sets of incentives that encourage publications. They want attention and prestige, and they want to be at the forefront of innovation. So we can’t do that. Should we maybe fix the university system? We can’t do that either, because there we face the same challenges. Universities gain prestige the same way journals do, so they don’t have the incentive to change either. Nosek suggests starting from the bottom up instead, from the practices of the single scientist to be accountable, rather than from the top.

At the boundaries, we take risks and we will be wrong a lot. That’s what science is supposed to do. But by that design the discovery component suddenly outweighs the other side of science, verification, which is kind of boring. Verification is just as important, but not as exciting! We value innovation more. Just last week this was my Facebook status update:

“We had a big discussion at our conference dinner yesterday about science, its history and its impact. Yes, there was wine flowing :). One person in the group stated matter-of-factly “the biggest driver in science is discovery!”. Fortunately, I was not the only one who disagreed and vehemently at that. We need to get away from this thinking – science is incremental! It’s important that we assign as much glory to the people checking results, improving statistics, interpreting data, improving on some technique etc. as to the people who discover something “first”!” with a link to an ode to incremental research.

By the way, the person in the group is a brilliant scientist. I was rather perplexed by this attitude, but I am finding more and more that it is prevalent in astronomy. We will face a big challenge in trying to rebalance this. Can we find some incentives to make verification more interesting and provocative? It’s gonna be difficult. By the way, verification is not attacking the original scientist, and disagreements over a subject do not mean that science is broken. That’s actually how science works! You gather some evidence here, somebody tries to disprove it there, and then you converge on a result. Well, if that’s how science works, won’t it self correct? Isn’t discovery then still the main driver? Only eventually; it takes a very, very long time! That’s not ok! We can correct and clarify quicker and more efficiently.

So what are the suggestions to counter that thinking? In the podcast they delve into a nice anecdote about a study in which people acted slower whenever they heard about old age. When the study couldn’t be replicated, the original scholar just scowled: “well, you didn’t do it right!”. In the original paper they even reproduced the result, so obviously there was something in the methodology that was giving them those results. They are still talking about psychology, but even in astronomy, we have our way of doing things. What is a speck, some background variability, to somebody is a Lyman alpha blob at a sigma of 2.5 at extremely high redshift to somebody else. Some people have great observing ability, positioning the slit exactly right; some are masters of getting that last little photon out of the data. But when is the science gotten from that photon reproducible? There is often so much subtlety and nuance! Failing to replicate also does not mean that the original result is false!

So the recipe is actually quite simple: be accountable! Describe each detail of the methods. I like the approach of people now putting their scripts online via the VO or github (e.g. https://github.com/nhmc/H2) to really make their methods transparent and accessible. Some people provide diaries to their colleagues / collaborators on what they do (I know, I have, and I find the ones of others extremely helpful as some sort of cookbook). If we could make this even more open it would be great, because documenting your workflow is of real value! Every researcher does more research than he/she actually publishes. There may be diamonds in the rough there if all that data is open, as opposed to the biased representation in the published literature.

This way of opening up methods also raises, and could correct, another issue: when you write a proposal, you already take a confirmatory stance. To correct for the strong expectation of a result, you can present the tools you will use to analyze the data. This is why simulations of data in proposals are encouraged. When the data comes in, all you have to do is run it through the already developed tools and just confirm or deny your hypothesis without fiddling, adjusting or even fudging the output. Register the analysis software in advance. It reduces your degrees of freedom, but makes you fairer, even if the data may not look as pretty. Better yet, have two competing camps work on an analysis script together!
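One lightweight way to "register" an analysis script could look like the sketch below. It is only an illustration under my own assumptions, not a tool mentioned in the podcast: the function names, the record layout and the example script contents are hypothetical. The idea is to record a SHA-256 fingerprint of the script before the data arrives, so that anyone can later check that the script that produced the result is the one that was promised.

```python
import hashlib
import json
from datetime import datetime, timezone


def register_analysis(script_source: bytes, hypothesis: str) -> dict:
    """Build a registration record for an analysis script (hypothetical layout)."""
    return {
        "sha256": hashlib.sha256(script_source).hexdigest(),
        "hypothesis": hypothesis,
        "registered_at": datetime.now(timezone.utc).isoformat(),
    }


def matches_registration(script_source: bytes, record: dict) -> bool:
    """Check that the script is byte-for-byte the one that was registered."""
    return hashlib.sha256(script_source).hexdigest() == record["sha256"]


if __name__ == "__main__":
    # Stand-in for the contents of a real analysis script.
    registered_script = b"detection_threshold_sigma = 5.0\n"
    record = register_analysis(registered_script, "hypothetical example hypothesis")
    print(json.dumps(record, indent=2))

    # Later, after the data has come in: was the threshold quietly relaxed?
    tweaked_script = b"detection_threshold_sigma = 2.5\n"
    print(matches_registration(registered_script, record))
    print(matches_registration(tweaked_script, record))
```

A fingerprint like this only proves the script did not change; it says nothing about whether the analysis itself is sound, which is where the two competing camps come in.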

Last, but not least, there is some fame and glory for shooting studies down. But only for the really famous ones. For the simple data analysis paper, we are often met with hesitation: “Why do you feel the need to question that result?”. But it actually is quite doable for high impact studies. This means that one doesn’t constantly replicate things and will actually discover things, too, but it at least makes sure that the most “high impact” results are validated.

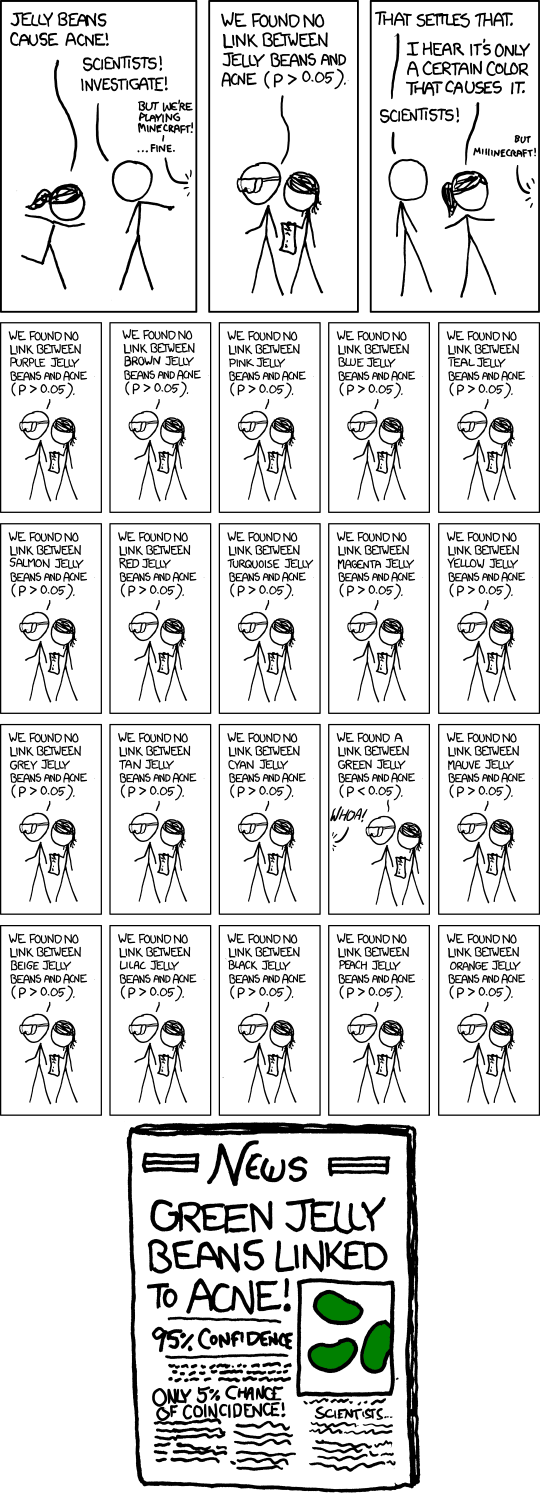

These are issues that have been of concern for years. Scientists don’t want to waste their time on things that aren’t true, so obviously they want to get at the heart of the problem. It’s actually great that people are looking critically at the research methods that scientists use. Even just knowing that we might have a skewed view in published results is valuable in and of itself. So if you made it this far, I have now made you aware of yet another unconscious bias we carry around and need to account for. I leave you with a funny comic from xkcd that a commenter on the podcast linked to. It is very relevant to the discussion.